Here’s an excerpt from occult author Erik Davis’ Burning Shore newsletter:

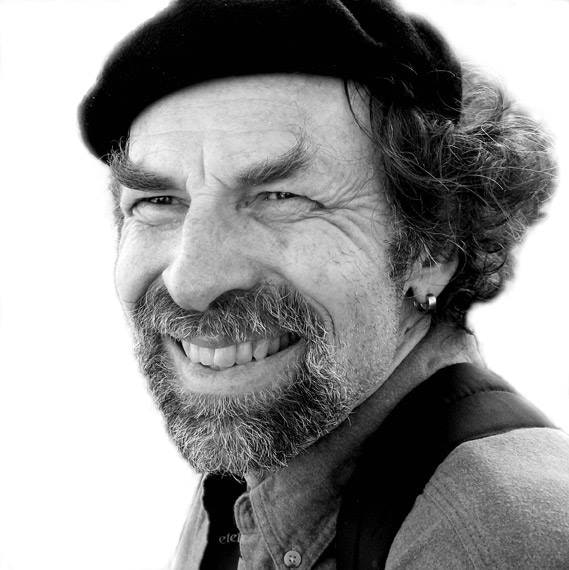

It was Dale Pendell’s birthday recently, which gave those of us who knew the man a reason to recall both his excellence and his death just a few years back. I was blessed to have broken bread with the guy, but I could have broken more.

For those who haven’t had the pleasure, Dale was a poet, scholar, herbalist, programmer, bibliophile, and dharma bum whose skill set incarnated a particularly Californian articulation of mystic pragmatism. In addition to many books of poetry, a fine sci-fi dystopia (The Great Bay, 2010) and a romantic essay on Burning Man (Inspired Madness, 2006), Pendell wrote some of the mightiest works on psychoactive drugs of his or any generation. As I discussed in a long-ago Bookforum essay, Pendell’s kaleidoscopic Pharmako series, comprising Pharmako/Poeia (1995), Pharmako/Dynamis (2002), and Pharmako/Gnosis (2005), weaves together poetry, history, religion, politics, and pharmacology into an extended meditation on the Poison Path, which Dale describes as a “vegetable alchemy” that combines rigorous and informed self-experimentation with a street-smart sorcery that risks “the seduction of angels.”

Given their polymathic reach, Dale’s Pharmako books are pedagogical wonders, perfect for a moment like now, when so many newbs are turning on. But I fear that, like the writings of so many heterodox bohemians and intellectuals, Pendell’s works are being forgotten, if not actively pulped. Today’s psychedelic discourse is largely a legitimation operation, not a literary one, a process of professionalization driven by turf wars, Instagram “experts,” colonizing therapy talk, and the profane hype of capital. Outside the margins — which still exist, fear not — there is little room for weirdness, or poetry, or the simultaneously pagan and posthuman implications of pharmacological symbiosis.

But fear not, my fellow friends and wizards and ontological midwives: like the resinous spunk of a psychoactive Artemisia, Dale’s spirit infuses Pharmako-AI (Ignota Books, 2020), which is not only one of the most provocative books I have read in a while, but may well come to be seen — at least if techgnostics like me have their say — as an epochal opening move in the 21st century’s Great Game of human-computer communion. The book lists K Allado-McDowell as its sole author, but the reality is more complicated, for Allado-McDowell handed off the generation of the bulk of its pages to a shockingly clever natural language processing system known as GPT-3. Though not the first book to be written largely by algorithm, Pharmako-AI is no doubt the most oracular.

GPT-3, which stands for Generative Pre-trained Transformer 3, was given a controlled release last year by the San Francisco company OpenAI. The language model, as such systems are called, makes extraordinarily good guesses about the next token (a word, word fragment, or number) in a given sequence. Given a very short initial prompt, just a few words or so, GPT-3 is capable of generating an entire short story, with believable dialogue and a contemporary tang. The guesses it makes are based on a collection of trained parameters that vastly outnumbers those of previous models, and that in turn required a gargantuan “pre-training” data set, which in GPT-3’s case included Wikipedia, popular links on Reddit, the booty from eight years of web crawling, and a pile of digitized books eight miles high (at least as I imagine it).

In one of those ouroboric, snake-biting-its-own-tail loops that characterize technological power today, OpenAI researchers warned about the dangers of GPT-3 in the very paper that announced its arrival. GPT-3 is pretty good at generating fake news, fooling over fifty percent of readers in one informal study, and like many Internet-fed NLP machines it excels at racist bile. Companies thirsty to automate engagement with the public should remember that, for all its apparent smarts, GPT-3 does not know how the world works. It knows how to put language together, which is not the same thing. During a try-out for its possible use as a medical chatbot, GPT-3 suggested to one simulated querent that they should probably just go ahead and kill themselves.

Subscribe to Erik’s newsletter:

https://www.burningshore.com/subscribe?utm_source=substack&utm_medium=email&utm_content=postcta